The “ChatGPT Moment” for Robots

At CES 2026, NVIDIA CEO Jensen Huang declared that Physical AI has reached its “ChatGPT moment.” While traditional AI lives inside screens and chat windows, Physical AI is designed to operate in the real world, powering machines that can see, reason, and move much as humans do. To lead this shift, NVIDIA has moved beyond making chips: it has released a large suite of open-source models and tools to help companies, from car manufacturers like Mercedes-Benz to robotics pioneers like Boston Dynamics, build smarter autonomous systems.

Quick Summary of the Big Moves

| Focus Area | Key Innovation | Impact |
| --- | --- | --- |
| Self-Driving | Alpamayo Suite | Cars that can “explain” their driving logic in plain English. |
| Humanoid Robots | GR00T N1.6 | Advanced whole-body coordination for human-shaped robots. |
| World Training | Cosmos Platform | AI-generated “synthetic” videos to train robots safely in virtual worlds. |
| Hardware | Jetson T4000 | A compact AI “brain” that is 4x faster and more energy-efficient. |

1. Smarter Self-Driving Cars

NVIDIA has introduced the Alpamayo family of open-source AI models and tools to advance safe, reasoning-based autonomous vehicle development. The suite includes Alpamayo 1, described as the first open vision-language-action (VLA) model with chain-of-thought reasoning, which helps vehicles handle the rare, complex driving scenarios known as long-tail edge cases. By approximating humanlike judgment, the model lets an autonomous system think through a decision step by step and give an explainable rationale for its actions. The ecosystem also provides the AlpaSim simulation framework and a large collection of Physical AI open datasets for high-fidelity testing and validation. Industry leaders such as JLR, Lucid, and Uber are already using these tools to accelerate their Level 4 autonomy roadmaps. By publishing these resources on platforms like Hugging Face and GitHub, NVIDIA aims to foster transparency and faster innovation across the automotive research community, with the goal of safer and more scalable self-driving systems.
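To make the idea of “explainable” driving logic concrete, here is a minimal sketch of how a step-by-step reasoning trace could be stored alongside a planned path. The `DrivingDecision` class, its fields, and the example scenario are all hypothetical illustrations, not Alpamayo's actual output format or API.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class DrivingDecision:
    """Hypothetical container pairing a planned path with the reasoning behind it."""

    reasoning_steps: List[str]
    # Planned (x, y) positions in metres; an assumed representation.
    waypoints: List[Tuple[float, float]] = field(default_factory=list)

    def explain(self) -> str:
        """Render the reasoning trace as numbered plain-English steps."""
        return "\n".join(
            f"{i}. {step}" for i, step in enumerate(self.reasoning_steps, start=1)
        )


# Illustrative data only: an invented long-tail scenario.
decision = DrivingDecision(
    reasoning_steps=[
        "A ball rolled into the road from the right.",
        "A child may follow the ball.",
        "Slow down and keep to the left of the lane.",
    ],
    waypoints=[(0.0, 0.0), (5.0, 0.3), (10.0, 0.5)],
)
print(decision.explain())
```

Keeping the trace next to the trajectory means a reviewer can audit why a maneuver was chosen, which is the property the “explainable logic” claim refers to.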

2. Improved Brains for Robots

NVIDIA’s Cosmos and GR00T N1.6 models mark a shift in how machines interact with the physical world, from rigid programming to learned “world models.” The Cosmos platform generates highly realistic, physics-accurate videos, allowing robots to observe and learn how objects behave when touched or moved. This “synthetic” training matters because a robot can practice a complex task millions of times in a risk-free virtual environment before attempting it in a real factory. Building on this foundation, GR00T N1.6 targets humanoid robots, providing the whole-body coordination needed to balance, walk, and use human tools with precision. By combining visual reasoning with physical dexterity, these models are intended to help robots understand social cues and safety contexts so they can work alongside humans more naturally and safely.

World Foundation Models for Physical AI

Cosmos Predict

Predict future states of dynamic environments for robotics and AI agent planning.

This world generation model produces up to 30 seconds of high-fidelity video from multimodal prompts.

Cosmos Transfer

Accelerate synthetic data generation across various environments and lighting conditions.

This multicontrol model transforms 3D or spatial inputs from physical AI simulation frameworks, such as CARLA or NVIDIA Isaac Sim™, into fully controlled high-fidelity video.

Cosmos Reason

Enable robots and vision AI agents to reason like humans.

This multimodal vision language model (VLM) leverages prior knowledge, physics understanding, and common sense to comprehend the real world and interact with it.

NVIDIA Isaac GR00T N1: An Open Foundation Model for Humanoid Robots

GR00T N1.6 is a “Vision-Language-Action” (VLA) model: it processes visual data and natural-language instructions together to control a robot’s entire body, replacing rigid hand-written code with learned behavior. Compared with previous versions, N1.6 uses a much larger “diffusion transformer” architecture (in effect, a deeper neural network), which allows noticeably smoother and more fluid movements. This lets human-shaped robots perform “loco-manipulation,” the complex ability to walk and use their hands at the same time, such as opening a heavy door while stepping through it. Because it is trained on thousands of hours of diverse data, ranging from bimanual arm movements to full-body coordination, GR00T N1.6 can generalize its skills. A robot trained in a virtual warehouse can therefore adapt more easily to a real-world kitchen or factory floor without being reprogrammed for every new task.
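The vision-plus-language-in, action-out loop described above can be sketched as follows. This is an assumed, simplified interface: `Observation`, `dummy_policy`, and `run_episode` are hypothetical names, the policy is a stub that returns zeros, and the idea of emitting a short “chunk” of actions per step is an assumption rather than a documented detail of GR00T N1.6.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence

# One action = one target value per joint; a chunk = a short sequence of actions.
Action = List[float]
ActionChunk = List[Action]


@dataclass
class Observation:
    """One control-step input: a camera frame plus a language instruction."""

    image: Sequence[Sequence[float]]  # placeholder for pixel data
    instruction: str


def dummy_policy(obs: Observation, num_joints: int = 3, horizon: int = 4) -> ActionChunk:
    """Hypothetical stand-in for a VLA model: returns a chunk of zero actions.

    A real model would condition its output on obs.image and obs.instruction.
    """
    return [[0.0] * num_joints for _ in range(horizon)]


def run_episode(
    policy: Callable[[Observation], ActionChunk],
    get_observation: Callable[[], Observation],
    send_action: Callable[[Action], None],
    steps: int,
) -> int:
    """Closed loop: observe, predict an action chunk, execute it, repeat."""
    executed = 0
    for _ in range(steps):
        obs = get_observation()
        for action in policy(obs):
            send_action(action)
            executed += 1
    return executed


# Usage with stubbed sensors and actuators.
log: List[Action] = []
frame = [[0.0, 0.0], [0.0, 0.0]]
total = run_episode(
    policy=dummy_policy,
    get_observation=lambda: Observation(frame, "open the door"),
    send_action=log.append,
    steps=2,
)
print(total)  # 2 steps x 4 actions per chunk
```

The point of the sketch is the shape of the loop: the same policy call handles any instruction string, so changing the task means changing the prompt rather than rewriting the controller.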

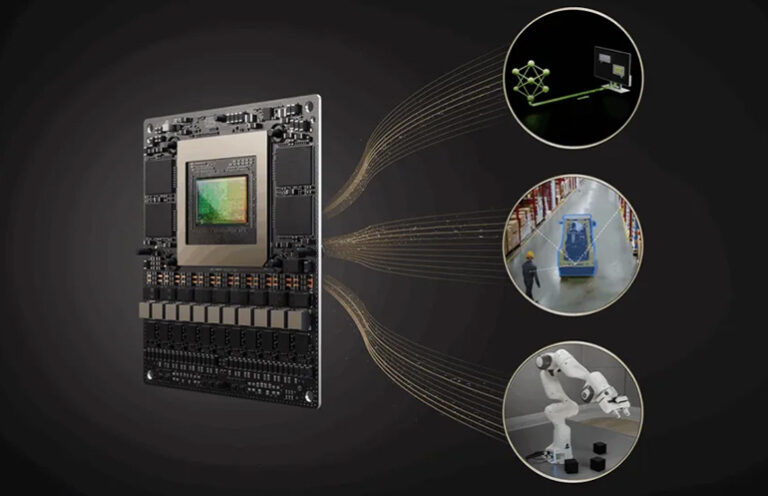

3. Powerful New Hardware

To supply the computing power these new AI models require, NVIDIA launched the Jetson T4000 module, built on the Blackwell architecture. Priced at $1,999, it is designed to be the “intelligent core” for the next generation of autonomous machines. It delivers 1,200 TFLOPS of AI performance, a 4x leap over the previous Orin generation, within a compact 70-watt power envelope. That efficiency is critical for battery-powered robots, which must run heavy generative AI workloads and process real-time sensor data (such as LiDAR and multiple 4K cameras) without overheating or draining power too quickly. By bringing data-center-level “reasoning” capabilities directly to the edge, the T4000 lets robots operate independently of the cloud, so they can make split-second safety decisions even in areas with poor internet connectivity.
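As a rough back-of-envelope check, the quoted figures can be converted into performance per watt. The sketch below uses only the numbers stated in this section (1,200 TFLOPS, 70 W, a 4x gain); the implied previous-generation figure is simply the quotient of those numbers, not an official specification.

```python
# Back-of-envelope arithmetic using only the figures quoted in this article.
T4000_TFLOPS = 1200      # quoted AI performance
T4000_WATTS = 70         # quoted power envelope
SPEEDUP_VS_PREVIOUS = 4  # quoted generational gain


def tflops_per_watt(tflops: float, watts: float) -> float:
    """Return compute efficiency in TFLOPS per watt."""
    return tflops / watts


efficiency = tflops_per_watt(T4000_TFLOPS, T4000_WATTS)
# Derived value, not a published spec: quoted performance divided by quoted gain.
implied_previous_tflops = T4000_TFLOPS / SPEEDUP_VS_PREVIOUS

print(f"Efficiency: {efficiency:.1f} TFLOPS/W")
print(f"Implied previous generation: {implied_previous_tflops:.0f} TFLOPS")
```

With these inputs the script reports about 17.1 TFLOPS per watt and an implied 300 TFLOPS for the previous generation.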
